The AI Reckoning: Navigating the Four Fractures of the Generative Era

The Artificial Infrastructure Trap

A Gnaedinger Consultancy White Paper

PART 1: THE DARK GPU TRAP

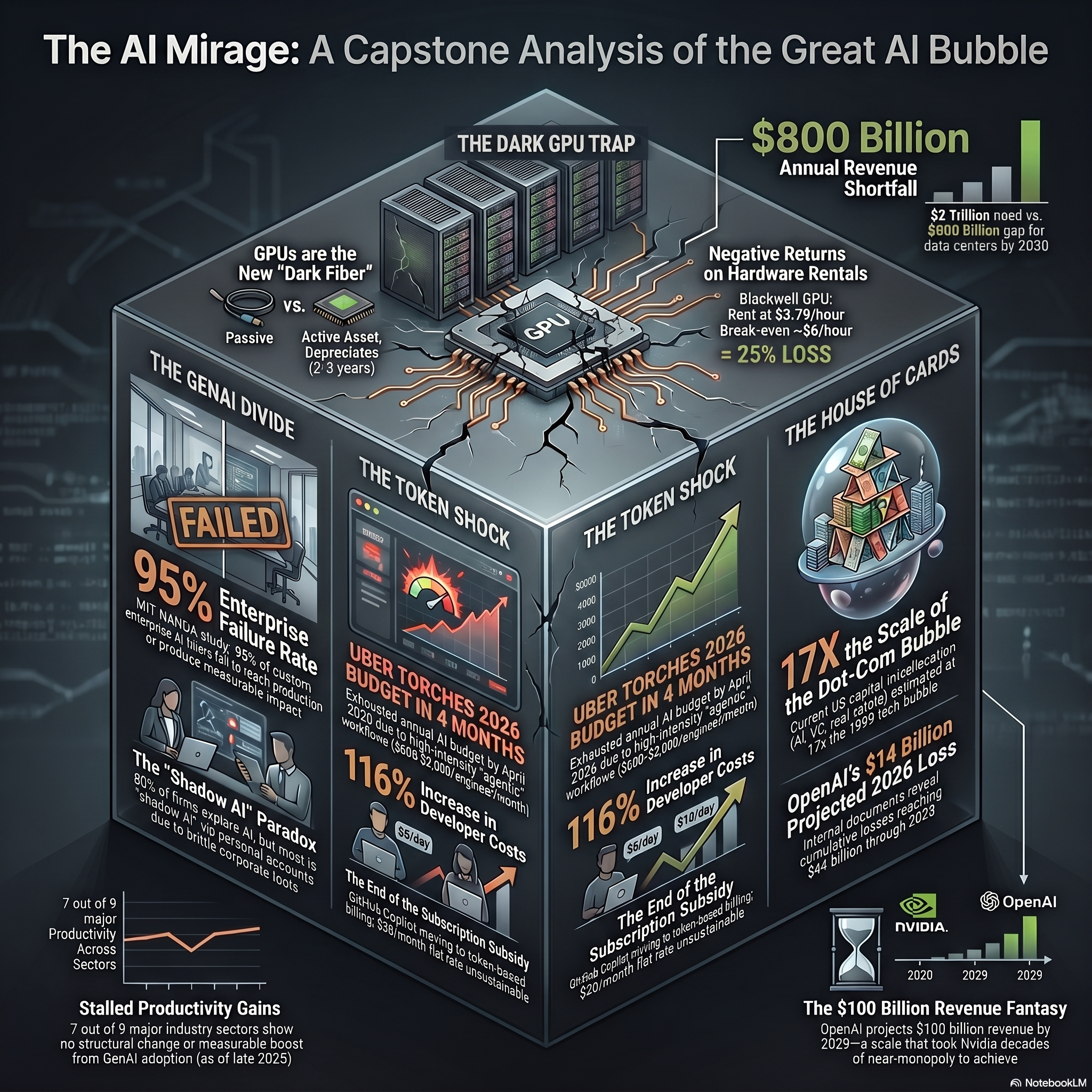

In the late 1990s, telecommunications companies made a catastrophic miscalculation. Believing internet traffic was doubling every 100 days, companies raced to bury more than 80 million miles of fiber optic cable. When demand failed to materialize at that projected pace, the model collapsed, leaving 85% to 95% of that infrastructure sitting unused as "dark fiber".

Today, we are witnessing a structural parallel, but the scale is staggering. Macro Strategy Partnership characterizes the current AI bubble as the most dangerous in history, calculating the misallocation of capital to be roughly 17 times larger than the dot-com bubble.

Hyperscalers are currently pouring over $300 billion a year into AI infrastructure. To sustain this breathtaking pace of compute investment through 2030, a recent Bain & Company report estimates that the AI industry needs to generate $2 trillion in annual revenue. The problem? Current generous projections suggest the industry will only produce about $1.2 trillion, leaving a massive **$800 billion annual shortfall**.
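The shortfall is simple subtraction, but it helps to see how much of the required revenue the projection actually covers. A minimal sketch using only the two figures quoted above (the variable and function names are illustrative, not from the Bain report):

```python
# Figures quoted in the text, in billions of US dollars per year.
REQUIRED_REVENUE_BN = 2_000   # Bain & Company estimate of revenue needed
PROJECTED_REVENUE_BN = 1_200  # projection cited in the text


def annual_shortfall(required_bn: int, projected_bn: int) -> int:
    """Return the yearly revenue gap in billions of dollars."""
    return required_bn - projected_bn


shortfall_bn = annual_shortfall(REQUIRED_REVENUE_BN, PROJECTED_REVENUE_BN)
coverage = PROJECTED_REVENUE_BN / REQUIRED_REVENUE_BN

print(f"Shortfall: ${shortfall_bn} billion per year")
print(f"Projected revenue covers {coverage:.0%} of what is needed")
```

On these numbers the projection covers 60% of the required revenue, so the remaining 40% is the gap.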

Many argue that the dot-com crash was survivable because the "dark fiber" eventually powered the modern internet. But this assumes GPUs are like fiber optics. They aren't. Unlike passive glass cables, GPUs are purpose-built hardware with a useful lifespan of just two to three years before becoming obsolete. They depreciate rapidly, and every day a data center full of these chips sits underutilized, massive operating costs pile up against revenue that hasn't arrived yet.
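To make the depreciation point concrete, here is a rough sketch of how much of a GPU's purchase value disappears while it sits idle. It assumes straight-line depreciation over the two-to-three-year lifespan mentioned above; both the depreciation method and the six-month idle period are illustrative assumptions, not figures from any source cited here.

```python
def value_lost_fraction(idle_months: float, lifespan_years: float) -> float:
    """Fraction of purchase value written off during idle_months.

    Assumes straight-line depreciation (an illustrative simplification),
    capped at 100% once the lifespan has fully elapsed.
    """
    lifespan_months = lifespan_years * 12
    return min(idle_months / lifespan_months, 1.0)


# Hypothetical scenario: hardware sits underutilized for six months.
for lifespan_years in (2, 3):
    lost = value_lost_fraction(idle_months=6, lifespan_years=lifespan_years)
    print(f"{lifespan_years}-year lifespan: {lost:.1%} of value gone after 6 idle months")
```

Under these assumptions, six idle months consume between roughly a sixth and a quarter of the hardware's value, before counting any operating costs.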

PART 2: THE TOKEN SHOCK

The unit economics of enterprise AI are quietly failing. Flat-rate subscriptions are being replaced by token-based billing, and the costs are spiraling out of control.

Uber's CTO recently announced that the company burned through its entire 2026 AI budget in just four months. What caused this massive shortfall? A surge in the use of Anthropic's agentic AI tool, Claude Code. By the end of the first quarter, 92% of Uber's 12,000 software engineers were using the tool, generating over 15 million lines of code suggestions weekly. The shift to "agentic" workflows requires massive amounts of tokens. Engineers at Uber were generating API costs between $500 and $2,000 per person, per month.
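The per-engineer figures look modest until they are multiplied across the workforce. The sketch below is a back-of-the-envelope estimate from the numbers quoted above. It assumes every active user falls somewhere in the $500 to $2,000 range and that usage stays flat all year, neither of which the source states, so treat the result as an order-of-magnitude range rather than Uber's actual bill.

```python
# Figures quoted in the text.
ENGINEERS = 12_000
ADOPTION_PERCENT = 92             # share of engineers using the tool
COST_RANGE_USD = (500, 2_000)     # API cost per person, per month


def monthly_spend_range(engineers: int, adoption_percent: int,
                        cost_range: tuple[int, int]) -> tuple[int, int, int]:
    """Return (active users, low monthly spend, high monthly spend) in USD."""
    # Integer arithmetic avoids floating-point rounding on the head count.
    active_users = engineers * adoption_percent // 100
    low, high = cost_range
    return active_users, active_users * low, active_users * high


active, low, high = monthly_spend_range(ENGINEERS, ADOPTION_PERCENT, COST_RANGE_USD)

print(f"Active users: {active:,}")
print(f"Monthly API spend: ${low:,} to ${high:,}")
print(f"Annualized: ${low * 12:,} to ${high * 12:,}")
```

Under those assumptions, 11,040 active engineers imply roughly $5.5 million to $22 million a month, or about $66 million to $265 million a year.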

Across the industry, developers are running multiple AI agents in parallel. In extreme cases, a single employee has been reported to spend over $150,000 a month on AI tokens. Even Anthropic quietly doubled its own official cost estimates for average Claude Code users to $150-250 per developer per month.

This leads to a terrifying realization for the enterprise. Bryan Catanzaro, VP of Applied Deep Learning at Nvidia, recently admitted that for his team, the cost of compute is far beyond the costs of the employees. If the cost of AI tokens reaches parity with the cost of the human labor it is supposed to automate, the entire business case collapses. You are no longer automating work; you are just rerouting your payroll costs to hyperscalers.

PART 3: THE GEN AI DIVIDE

Despite billions being spent, a 2025 MIT Media Lab report found that approximately 95% of enterprise generative AI implementations fail to produce a measurable impact on profit and loss. Why? Because custom enterprise AI tools often lack persistent memory and fail to adapt to complex, day-to-day workflows.

Furthermore, companies are misallocating their funds. Roughly 70% of AI budgets are being spent on highly visible sales and marketing tools. However, the most measurable ROI is actually found in back-office operations like procurement automation and code modernization. For example, Amazon saved $260 million annually and 4,500 developer years just by using AI to update old Java applications.

But here is the most fascinating finding from the MIT report: the shadow AI economy. While official corporate AI pilots stall, employees are secretly getting the job done themselves. The research reveals that while only 40% of companies have purchased official LLM subscriptions, workers from over 90% of organizations regularly use personal AI tools to automate their daily tasks. Employees know what good AI feels like, making them completely intolerant of brittle, over-engineered corporate systems. The shadow AI economy is currently vastly outperforming official enterprise implementations.

PART 4: THE HOUSE OF CARDS

The entire AI ecosystem's valuation is tied to the success of a few labs whose business models are fundamentally unproven and highly fragile. Let's look at the undisputed leader: OpenAI.

According to internal financial documents, OpenAI projects a staggering $14 billion loss in 2026. Between 2023 and 2028, they expect cumulative losses of $44 billion. Currently, the company anticipates spending approximately $22 billion this year against roughly $13 billion in sales. That is $1.69 in spending for every single dollar of revenue generated.
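The spending-to-revenue ratio comes straight from the two annual figures above. A minimal sketch of the calculation (names are illustrative; the inputs are the approximate figures quoted in the text):

```python
# Approximate annual figures quoted in the text, in billions of US dollars.
SPENDING_BN = 22
SALES_BN = 13


def spend_per_revenue_dollar(spending_bn: float, sales_bn: float) -> float:
    """Dollars spent for each dollar of revenue, rounded to the cent."""
    return round(spending_bn / sales_bn, 2)


ratio = spend_per_revenue_dollar(SPENDING_BN, SALES_BN)
revenue_share = SALES_BN / SPENDING_BN

print(f"Spending per $1 of revenue: ${ratio:.2f}")
print(f"Revenue covers {revenue_share:.0%} of spending")
```

Read the other way around, sales cover only about 59% of what the company expects to spend.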

OpenAI recently missed its internal targets for user growth and revenue, sending shockwaves through partners like Oracle and chipmakers like Broadcom. To survive and bridge this massive gap, OpenAI is quietly shifting its entire business model. They are now aggressively pivoting to capture $100 billion in digital advertising revenue by 2030. They have already launched a pilot program displaying ads to users on ChatGPT's free and lower-priced tiers.

The AI industry has a reflexivity problem. Valuations drove infrastructure investment, which drove GPU demand, which drove valuations higher. It is a circle, not a foundation. If the timeline slips even slightly—if OpenAI misses its 2029 revenue projection by just 30%—the financial consequences for the interconnected tech giants will not be a bad quarter. It will be a historic cascade.

#ArtificialIntelligence #TechBubble #DataCenters #EnterpriseTech #AIEconomics #FutureOfWork

© 2026 Gnaedinger Consultancy. All rights reserved.