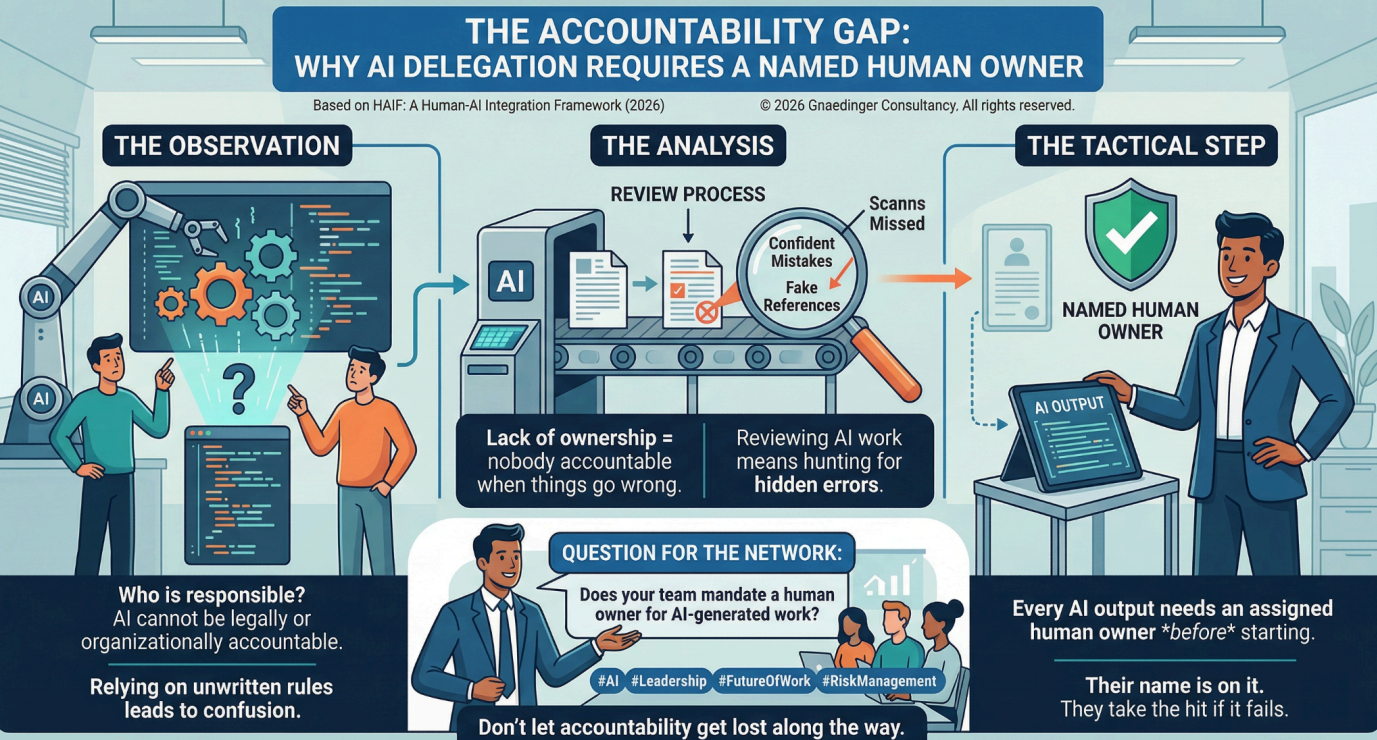

THE ACCOUNTABILITY GAP: WHY AI DELEGATION REQUIRES A NAMED HUMAN OWNER

The Observation:

When AI-generated work ends up in a final product, it is often unclear who is responsible for it. You cannot hold software legally or organizationally accountable. Many teams rely on unwritten rules and hope for the best.

The Analysis:

Without a named owner, no one answers for the result when things go wrong. Reviewing AI work means hunting for confident mistakes and fabricated references hidden behind fluent writing. If a vague process takes the blame instead of a specific person, people lose the incentive to check the work carefully.

The Tactical Step:

Every AI output needs a human owner. Assign this person before the task even starts. They do not have to personally read every single word the system generates. But their name goes on it. They take the hit if it fails.

Question for the Network:

When your team hands work off to AI, do you mandate a human owner for the final product? Or is accountability getting lost along the way?

#ArtificialIntelligence #OperationalExcellence #Leadership #FutureOfWork #RiskManagement

References: HAIF: A Human-AI Integration Framework for Hybrid Team Operations (2026)

© 2026 Gnaedinger Consultancy. All rights reserved.